Abstract: The vision division of the Society of Manufacturing Engineers (SME) and the automated vision division of the Robotic Industries Association (RIA) define machine vision as the automatic receipt and processing of an image of a real object through optical devices and non-contact sensors, in order to obtain the required information or to control the motion of a robot or machine. In plain terms, machine vision uses machines in place of the human eye to measure and make judgments.

Machine vision was first proposed in the 1960s, but it was not until the 1980s and 1990s that the field developed rapidly. Since the turn of the 21st century, machine vision technology has entered a mature stage.

According to public data, the global market for machine vision technology in industrial automation reached US$4.44 billion in 2018 and is expected to reach US$12.29 billion in 2023, a compound annual growth rate of 21%.

China's machine vision industry started later than the global industry, but it is developing rapidly. From 2011 to 2019, China's machine vision market grew from 1 billion yuan to 10 billion yuan, maintaining double-digit growth every year. China is now the world's third-largest machine vision market, after the United States and Japan.

Although the COVID-19 outbreak in 2020 affected the industry as a whole, it also became the largest "training ground" for machine vision. Drone spraying and disinfection, contactless robot delivery, and similar applications have accelerated public understanding of robots. In the long run, this awareness should speed up the development of the machine vision industry: all sectors will draw on the capabilities of machine vision in more diverse ways, driving an overall upgrade of the industry.

Based on the industry's current development, machine vision applications fall mainly into four functional areas:

Navigation and positioning

For the human eye, navigation and positioning amount to determining the relative and absolute positions of a target object. A machine needs its own pair of "eyes" for this: 3D vision.

To understand 3D vision, it helps to start with how it measures depth. Four 3D vision measurement technologies currently dominate the market: binocular (stereo) vision, TOF, structured light, and laser triangulation.

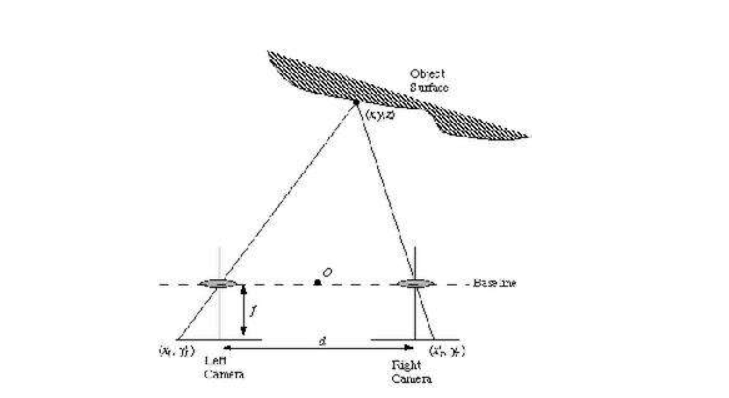

1. Binocular (stereo) vision is currently one of the most widely used 3D vision systems. Like a pair of human eyes, it observes the same scene from two viewpoints to obtain images from different perspectives, then applies the principle of triangulation to compute the parallax (disparity) between the images and recover the scene's three-dimensional information.

Binocular vision has modest hardware requirements, which helps keep costs down. However, it is very sensitive to ambient light: changes in lighting angle and intensity cause large differences in the brightness of the captured images, which places higher demands on the matching algorithm. In addition, the camera baseline (the distance between the two cameras) limits the measurement range: the longer the baseline, the farther the system can measure; the shorter the baseline, the closer the range.

These strengths and weaknesses make binocular vision better suited to online product inspection and quality control on the manufacturing floor. Some products also use this principle, such as terminal devices that navigate and localize with SLAM algorithms, and motion-sensing (somatosensory) cameras.
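To make the triangulation step and the baseline trade-off concrete, here is a minimal Python sketch, assuming an ideal rectified stereo pair under the pinhole camera model, where depth is Z = f·B/d (focal length f in pixels, baseline B, disparity d in pixels). The focal length, baselines, and the 0.5-pixel matching error are illustrative assumptions, not values from any particular product.

```python
# Minimal stereo triangulation sketch, assuming an ideal rectified pair
# (pinhole model, parallel optical axes). All numbers are illustrative.

def disparity_to_depth(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_error(depth_m: float, focal_px: float, baseline_m: float,
                disparity_error_px: float = 0.5) -> float:
    """First-order depth uncertainty: dZ ~ Z^2 / (f * B) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_error_px


if __name__ == "__main__":
    focal_px = 700.0  # hypothetical focal length in pixels
    depth_m = 3.0     # target distance to compare at
    for baseline_m in (0.05, 0.20):
        disparity = focal_px * baseline_m / depth_m
        err = depth_error(depth_m, focal_px, baseline_m)
        print(f"B = {baseline_m:.2f} m: disparity {disparity:.1f} px, "
              f"depth error about {err:.3f} m at {depth_m} m")
```

At 3 m, the 0.20 m baseline produces four times the disparity of the 0.05 m baseline and a quarter of the depth error, which is the baseline-range relationship described above.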

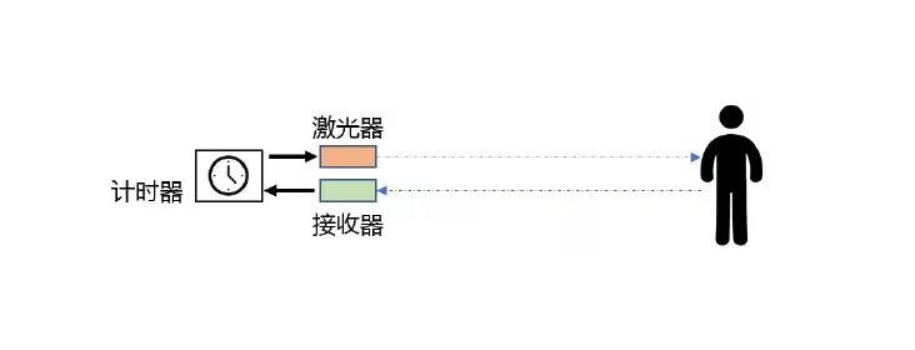

2. TOF (time of flight) imaging continuously sends light pulses toward the target, uses a sensor to receive the light reflected from the object, and obtains the target distance from the flight time of the light pulses.

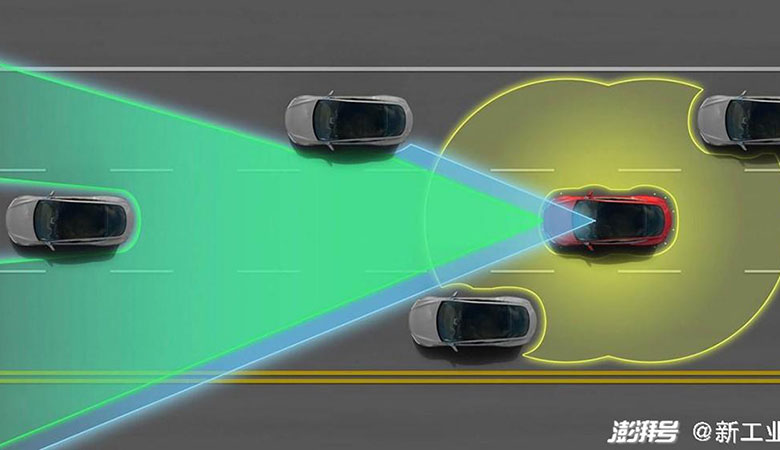

The core components of a TOF system are the light source and the photosensitive receiver module. No binocular matching algorithm is needed: depth is output directly through a fixed formula, so TOF offers fast response, simple software, and a long recognition distance. Because it does not need to acquire and analyze grayscale images, it is largely unaffected by external light sources and by the object's surface properties. The drawback of TOF is its low resolution, which prevents fine imaging.
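For pulsed TOF, the "fixed formula" is the round-trip relation d = c·t/2, since the light travels to the target and back. A minimal Python sketch follows; the continuous-wave (phase-measuring) variant is included as an assumption about a common alternative design, not something the text above describes, and the 20 MHz modulation frequency is illustrative.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def pulsed_tof_distance(round_trip_s: float) -> float:
    """Pulsed TOF: the pulse travels to the target and back, so d = c * t / 2."""
    return SPEED_OF_LIGHT * round_trip_s / 2.0


def cw_tof_distance(phase_rad: float, modulation_hz: float) -> float:
    """Continuous-wave TOF: d = c * phase / (4 * pi * f_mod)."""
    return SPEED_OF_LIGHT * phase_rad / (4.0 * math.pi * modulation_hz)


def cw_unambiguous_range(modulation_hz: float) -> float:
    """Beyond c / (2 * f_mod) the measured phase wraps around and repeats."""
    return SPEED_OF_LIGHT / (2.0 * modulation_hz)


if __name__ == "__main__":
    print(f"10 ns round trip -> {pulsed_tof_distance(10e-9):.3f} m")
    print(f"1 ns timing error -> {pulsed_tof_distance(1e-9) * 100:.1f} cm")
    print(f"20 MHz unambiguous range -> {cw_unambiguous_range(20e6):.2f} m")
```

The second line hints at why TOF hardware is demanding: every nanosecond of timing error corresponds to roughly 15 cm of range error.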

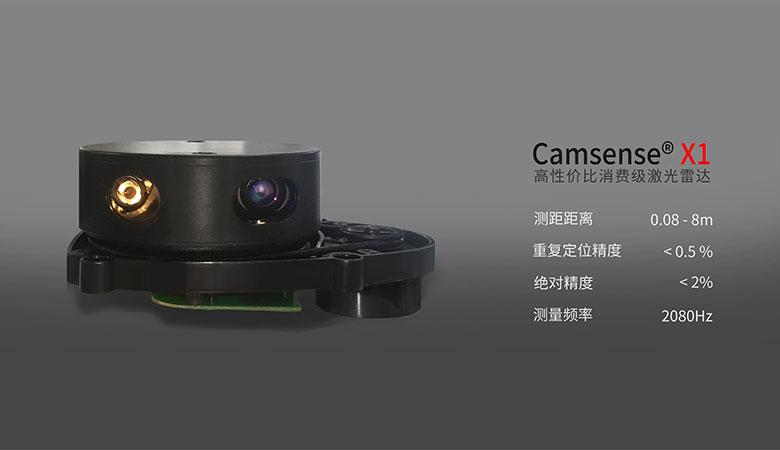

This makes TOF better suited to collecting 3D information at long distances; the most common example is lidar in autonomous driving. However, because of how the autonomous driving industry has developed, there are not many lidar companies in this field: leading companies have taken most of the market share, and the barrier to entry for latecomers is now very high. In recent years, as the share of robotic vacuum cleaners in the consumer (To C) market has kept growing, many companies have been considering TOF technology as a way into this field and a slice of the pie. The biggest problem facing TOF companies, however, is how to reduce costs, and it remains to be seen how they will solve it.

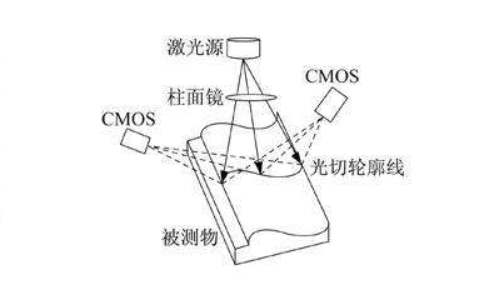

3. Because binocular vision and TOF each have shortcomings, a third method emerged: structured light. A light source projects structured light, such as a pattern of stripes or spots, onto the object to be measured. Because objects differ in shape, they deform the stripes or spots in different ways; an algorithm then computes distance, shape, size, and other information from these deformations to obtain a three-dimensional image of the object.

Structured light does not require the very precise time-delay measurement of TOF, and it avoids the complexity and robustness problems of the matching algorithm in binocular vision. It therefore offers simple computation and high measurement accuracy, and it can measure precisely even in low light and on surfaces without distinct texture or shape changes. As a result, more and more industries are adopting structured light; the most common example is facial recognition on mobile phones.
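Structured-light systems use many pattern-coding schemes. One common variant, used here purely as an illustration (the text does not say which scheme any product uses), is three-step phase shifting: three sinusoidal fringe patterns shifted by 2π/3 are projected, and the fringe phase at each pixel is recovered in closed form; the deformation of the stripes shows up as a change in this phase. The sketch below recovers the wrapped phase for one pixel; phase unwrapping and the calibrated phase-to-height mapping are omitted.

```python
import math

# Phase shifts of the three projected fringe patterns (three-step method).
SHIFTS = (-2.0 * math.pi / 3.0, 0.0, 2.0 * math.pi / 3.0)


def fringe_intensities(phase: float, ambient: float = 100.0, modulation: float = 50.0):
    """Simulate one pixel's intensities I_k = A + B * cos(phase + shift_k)."""
    return tuple(ambient + modulation * math.cos(phase + s) for s in SHIFTS)


def wrapped_phase(i1: float, i2: float, i3: float) -> float:
    """Recover the fringe phase in (-pi, pi] from the three intensities.

    I1 - I3 = sqrt(3) * B * sin(phase) and 2*I2 - I1 - I3 = 3 * B * cos(phase),
    so the ambient term A and the amplitude B both cancel.
    """
    return math.atan2(math.sqrt(3.0) * (i1 - i3), 2.0 * i2 - i1 - i3)


if __name__ == "__main__":
    true_phase = 1.0
    for ambient in (100.0, 180.0):  # brighter ambient light, same phase
        i1, i2, i3 = fringe_intensities(true_phase, ambient=ambient)
        print(f"ambient={ambient}: recovered phase {wrapped_phase(i1, i2, i3):.4f} rad")
```

Because the projected pattern supplies the features being measured, the object's own texture does not matter, and in this idealized model a uniform ambient offset cancels out entirely.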

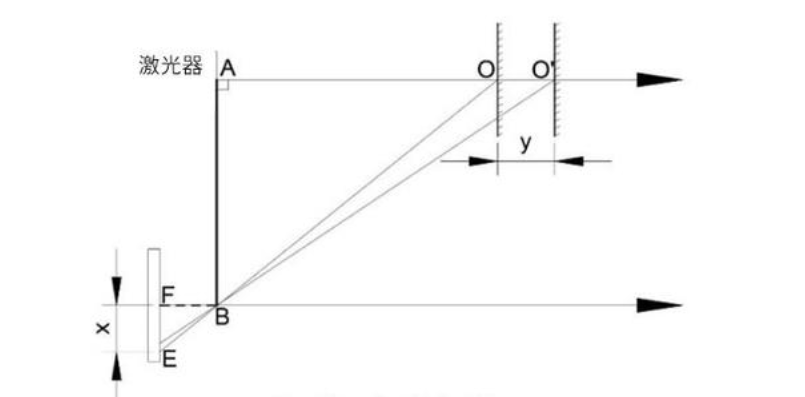

4. Laser triangulation ranging is also a geometric measurement method: in essence, it measures distance using triangular geometric relationships. A typical scheme uses a laser as the light source and, based on optical geometry, determines the three-dimensional coordinates of each point on an object from the imaging geometry between the light source, the object, and the detector.

This method usually uses a laser as the light source and a CCD/CMOS camera as the detector. By the geometry, the closer the object, the larger the shift of the laser spot on the CCD/CMOS sensor for a given change in distance, and therefore the higher the resolution and accuracy. The method is thus highly accurate at short and medium distances and especially suited to ranging over them, which has made it the preferred solution for indoor robot ranging and positioning.
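The near-versus-far accuracy claim can be checked with a simple geometry. The Python sketch below assumes a hypothetical setup in which the laser beam runs parallel to the camera's optical axis at a baseline b, so a spot at depth Z lands x = f·b/Z pixels from the principal point; the spot is located to sub-pixel precision with an intensity-weighted centroid. The setup, focal length, baseline, and spot profile are illustrative assumptions; commercial sensors often use angled layouts with their own calibration.

```python
def spot_centroid(profile):
    """Sub-pixel spot position along one sensor row: intensity-weighted centroid."""
    total = sum(profile)
    if total <= 0:
        raise ValueError("profile contains no signal")
    return sum(i * v for i, v in enumerate(profile)) / total


def depth_from_offset(offset_px: float, focal_px: float, baseline_m: float) -> float:
    """Parallel-beam triangulation: Z = f * b / x."""
    if offset_px <= 0:
        raise ValueError("offset must be positive")
    return focal_px * baseline_m / offset_px


def depth_step_per_pixel(depth_m: float, focal_px: float, baseline_m: float) -> float:
    """Depth change that moves the spot by one pixel: |dZ/dx| = Z^2 / (f * b)."""
    return depth_m ** 2 / (focal_px * baseline_m)


if __name__ == "__main__":
    focal_px, baseline_m = 1000.0, 0.05        # hypothetical sensor geometry
    window_start_px = 245                      # hypothetical crop offset from principal point
    profile = [0, 0, 0, 5, 40, 90, 40, 5, 0]   # made-up spot intensity profile
    offset = window_start_px + spot_centroid(profile)
    depth = depth_from_offset(offset, focal_px, baseline_m)
    print(f"spot at {offset:.2f} px -> depth {depth:.3f} m")
    for z in (0.2, 2.0):
        step_mm = depth_step_per_pixel(z, focal_px, baseline_m) * 1000
        print(f"at {z} m, one pixel of spot shift = {step_mm:.1f} mm of depth")
```

Because the depth step grows with Z squared, moving the target ten times farther away makes each pixel of spot shift correspond to a hundred times more depth, which is why the method favors short and medium ranges.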

Visual inspection

Appearance inspection is performed with image processing technology, that is, computer processing of image information.

Image processing serves three main purposes: improving the visual quality of an image; extracting features or other specific information contained in the image; and transforming, encoding, and compressing image data to make storage and transmission easier. These capabilities are ideal for checking products for quality problems on the production line, which is also the most important area where machine vision replaces manual labor.
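As an illustration of feature extraction on a production line, the sketch below compares a captured grayscale image against a defect-free reference ("golden") image and counts pixels that differ by more than a threshold. This template-difference approach is only one simple technique, assumed here for illustration; it presumes the part is already aligned and evenly lit, and the threshold and pixel limit are hypothetical.

```python
def defect_mask(image, template, threshold):
    """Mark pixels whose gray level differs from the reference by more than `threshold`."""
    return [
        [abs(p - t) > threshold for p, t in zip(img_row, ref_row)]
        for img_row, ref_row in zip(image, template)
    ]


def inspect(image, template, threshold=40, max_defect_pixels=3):
    """Return (passed, defect_pixel_count) for one aligned grayscale image."""
    mask = defect_mask(image, template, threshold)
    count = sum(sum(row) for row in mask)
    return count <= max_defect_pixels, count


if __name__ == "__main__":
    golden = [[200] * 6 for _ in range(6)]          # uniform, defect-free part
    scratched = [row[:] for row in golden]
    for r, c in ((2, 2), (2, 3), (3, 2), (3, 3)):  # simulated 2x2 dark blemish
        scratched[r][c] = 60
    print(inspect(golden, golden))     # (True, 0)
    print(inspect(scratched, golden))  # (False, 4)
```

Real inspection systems usually add alignment, lighting normalization, and blob analysis before the pass/fail decision; the sketch keeps only the core comparison.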

Image processing is also used in other fields, such as generating previews of original images, or processing the pictures captured by cameras mounted on cars or unmanned aerial vehicles.

Recognition

Recognition, as the word suggests, is a process of identifying and discriminating, which requires the machine to exercise human-like judgment. It is therefore achieved mainly by combining image processing with deep learning.

Image processing converts information about external objects into machine-readable data, completing the input of information through visual perception; learning from that data is essentially a machine learning process. Combining the two makes the recognition function intelligent. The most common applications are face recognition and autonomous driving.
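In face recognition, a common pattern (assumed here; the text does not specify one) is for a deep network to map each image to a feature vector, after which recognition reduces to comparing vectors. The Python sketch below shows only that comparison step, using cosine similarity; the embeddings, names, and the 0.8 threshold are made up, and the network that would produce real embeddings is not shown.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def identify(query, gallery, threshold=0.8):
    """Return (best_name or None, best_score) for a query embedding.

    `gallery` maps identity names to enrolled embeddings; a best score below
    `threshold` is treated as "unknown".
    """
    best_name, best_score = None, -1.0
    for name, embedding in gallery.items():
        score = cosine_similarity(query, embedding)
        if score > best_score:
            best_name, best_score = name, score
    return (best_name if best_score >= threshold else None), best_score


if __name__ == "__main__":
    gallery = {"person_a": [1.0, 0.0, 0.0], "person_b": [0.0, 1.0, 0.0]}  # made-up embeddings
    print(identify([0.9, 0.1, 0.0], gallery))  # matches person_a
    print(identify([0.5, 0.5, 0.7], gallery))  # too far from both -> None
```

The threshold trades false accepts against false rejects; in practice it would be tuned on labeled data rather than fixed in advance.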

High-precision detection

High-precision detection works on the same principles as the navigation and positioning function described first; the difference is that it may combine several measurement technologies into one high-precision detection system.

High-precision detection is used mainly in demanding industries such as precision machining and high-end industrial manufacturing, where detection accuracy must reach the micrometer (µm) level. Such accuracy is beyond the human eye and can only be achieved by machines, which has made high-precision detection a key application of industrial automation in the Industry 4.0 era.
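Whether a vision system can reach the µm level is largely a matter of arithmetic: each pixel covers (field of view ÷ pixel count) on the object. The sketch below estimates how many pixels are needed across a field of view, assuming a rule of thumb of about three pixels across the smallest feature to detect; that factor and the example numbers are assumptions, and in practice optics, lighting, and sub-pixel algorithms also affect the achievable accuracy.

```python
import math


def object_pixel_size_um(fov_mm: float, pixels: int) -> float:
    """Size of one pixel projected onto the object, in micrometres."""
    return fov_mm * 1000.0 / pixels


def pixels_needed(fov_mm: float, smallest_feature_um: float,
                  pixels_per_feature: float = 3.0) -> int:
    """Pixels required across the field of view to resolve the smallest feature."""
    return math.ceil(fov_mm * 1000.0 * pixels_per_feature / smallest_feature_um)


if __name__ == "__main__":
    fov_mm = 10.0  # hypothetical 10 mm field of view
    for feature_um in (50.0, 5.0):
        n = pixels_needed(fov_mm, feature_um)
        print(f"{feature_um} um features over {fov_mm} mm: {n} px "
              f"({object_pixel_size_um(fov_mm, n):.2f} um per pixel)")
```

Covering even a 10 mm field at the 5 µm level calls for about 6,000 pixels across, which is why µm-level systems typically rely on high-resolution sensors, magnifying optics, and small fields of view.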

The deepening penetration of artificial intelligence into industry, together with the Made in China 2025 strategy, is bound to drive continued growth in demand for machine vision.

Machine vision is a technology-intensive industry. Accumulating core technologies and innovating continuously are key factors for companies seeking a competitive advantage. We hope to see more outstanding talent emerge in the industry and contribute to its development.